Every time you open a new chat window with a generative AI model, it starts with amnesia. You are forced to re-explain your context, your projects, and your preferences for the hundredth time. Doing this well has evolved into a practice known as “context engineering”: making sure the AI assisting you knows enough about what’s going on to be useful.

The AI vendors know this is a problem, and their solution is predictable: they want to capture your memory within their platform. It’s the ultimate form of vendor lock-in. If they hold all your accumulated context, switching to a better, faster, or cheaper model next month becomes prohibitively painful.

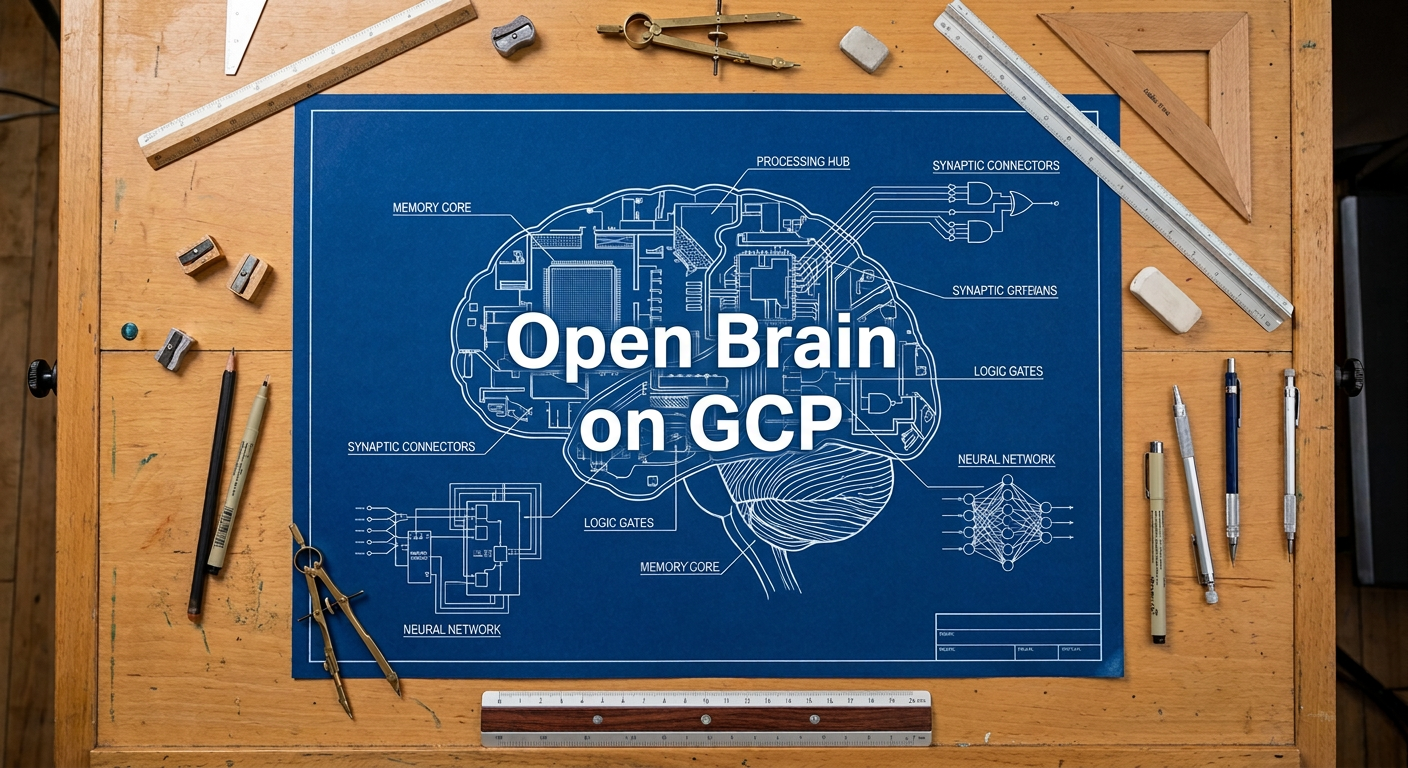

Nate B. Jones recently published a brilliant concept for solving this, called the “Open Brain.” His premise is simple but profound: abstract your memory away from the LLM vendor. By building a persistent, database-backed knowledge system that any AI can plug into, you let your context compound independently of the tools you use.

I loved the concept. But as a knowledge worker accustomed to designing enterprise architectures, I quickly found that the lightweight tools recommended for a quick setup couldn’t handle the depth of my workflows.

Here is a look at the $0.10, 45-minute on-ramp for those just getting started, the architectural landmines that experienced users will inevitably hit, and the enterprise-grade, GCP-backed Open Brain architecture I ultimately built to solve them.